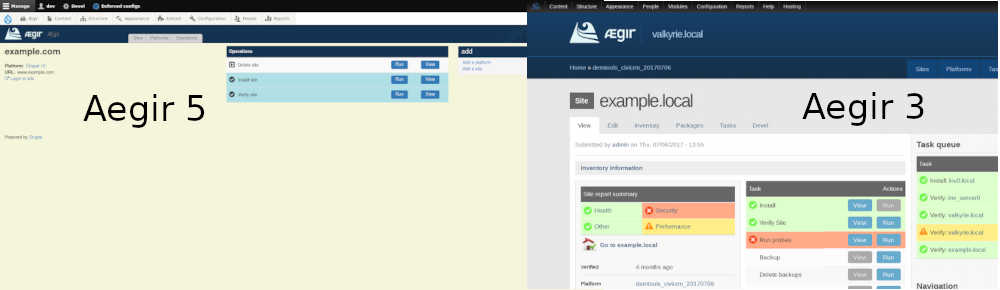

Aegir3 -> Aegir5

Deployment Targets, Projects, Releases, and Deployments

In Aegir3, Servers have only a “verify” task, which ensures that the front end can connect to the server over SSH. The new model will introduce the analogous Deployment Target entity. This will work similarly, ensuring that the front end can connect to the Kubernetes cluster or VM.

Platforms, for their part, generally have two tasks in Aegir3: verify, and migrate sites. The Verify task is largely responsible for deploying the platform, as well as ensuring proper file permissions and so forth.

Aegir5 represents a notable departure in this area. We’ve introduced two different entities to represent different aspects of what a Platform does in Aegir3.

First, we have Projects, each of which is effectively a codebase: a Git repository somewhere with an application that’s under development, evolving and improving.

On top of that is a Release, which in the Kubernetes backend amounts to building a new container image. This represents a snapshot of the Project at a specific point in time: a version of the application, all its dependencies, and the runtime environment config needed to run it.

In the short term, our focus is on this cloud-native use case, but the idea of Projects and Releases is applicable even in a more traditional VM-based scenario. At the infrastructure level, Kubernetes clusters are the current target, but Aegir will support a more traditional Server as well, allowing Projects to be deployed onto varying infrastructure models.

In Aegir3, Sites have a CRUD suite of tasks that includes installing, verifying, upgrading and deleting. In Aegir5, Sites will be represented by Deployments, for which we can deploy, update and delete the application. From the front end, this will look almost identical, but in the Kubernetes context a Deployment represents a running instance of the application: a container image of a particular Release, on a given Cluster.
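To make the relationships between these entities concrete, here is a minimal sketch of the model as plain Python dataclasses. The class names follow the entities described above, but every field name, the `kind` values, and the `image_tag` naming scheme are assumptions made for illustration; this is not Aegir5's actual schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeploymentTarget:
    """Where applications run: a Kubernetes cluster or a VM."""
    name: str
    kind: str  # hypothetical values: "kubernetes" or "vm"


@dataclass(frozen=True)
class Project:
    """A codebase, i.e. a Git repository holding the application."""
    name: str
    repo_url: str


@dataclass(frozen=True)
class Release:
    """A snapshot of a Project at a point in time."""
    project: Project
    version: str

    @property
    def image_tag(self) -> str:
        # Hypothetical naming scheme, for illustration only.
        return f"{self.project.name}:{self.version}"


@dataclass(frozen=True)
class Deployment:
    """A running instance of a Release on a given Deployment Target."""
    name: str
    release: Release
    target: DeploymentTarget


project = Project("example-site", "https://example.com/example-site.git")
release = Release(project, "1.0.0")
cluster = DeploymentTarget("cluster-a", "kubernetes")
deployment = Deployment("example-prod", release, cluster)
print(deployment.release.image_tag)  # example-site:1.0.0
```

The point of the sketch is the direction of the references: a Deployment points at exactly one Release and one Deployment Target, and a Release points back at its Project.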

There is one more important distinction at this level. Aegir3 relies extensively on Drupal’s multisite capabilities. With Aegir5, we are setting aside that functionality almost entirely. Instead, we treat each site as standalone. This allows for support of large sites with complex development workflows. However, we can also track which sites use a given image. As a result we can (with a single operation) trigger migrations/updates for all the instances that are using that image.
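As a rough illustration of that bookkeeping, the sketch below keeps an index from image to the sites that run it, so that one call can move every site on an old image to a new one. `DeploymentRegistry` and its methods are hypothetical names invented for this example, and the "migration" here only updates the index; a real implementation would trigger the actual update for each instance.

```python
from collections import defaultdict


class DeploymentRegistry:
    """Tracks which standalone sites run which image (illustrative only)."""

    def __init__(self):
        self._by_image = defaultdict(set)  # image -> set of site names
        self._image_of = {}                # site name -> image

    def deploy(self, site, image):
        """Record that `site` now runs `image`, replacing any previous image."""
        previous = self._image_of.get(site)
        if previous is not None:
            self._by_image[previous].discard(site)
        self._image_of[site] = image
        self._by_image[image].add(site)

    def sites_using(self, image):
        """Return the sites currently running `image`, sorted by name."""
        return sorted(self._by_image.get(image, ()))

    def migrate_all(self, old_image, new_image):
        """Move every site on `old_image` to `new_image` in one operation."""
        moved = self.sites_using(old_image)
        for site in moved:
            self.deploy(site, new_image)
        return moved


registry = DeploymentRegistry()
registry.deploy("site-a", "app:1.0")
registry.deploy("site-b", "app:1.0")
registry.deploy("site-c", "other:2.3")
print(registry.migrate_all("app:1.0", "app:1.1"))  # ['site-a', 'site-b']
```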

Tasks, Operations, and the Queue System

Aegir3 relies heavily on a bespoke task queue. As described elsewhere, what Aegir3 calls Tasks have become Operations in Aegir5, and these already keep a log of the backend output. Operations, in turn, are composed of Tasks, which in Aegir5 are more granular, configurable steps in a larger whole: for example, writing a virtual host configuration or setting up an HTTPS certificate.
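The composition can be sketched in a few lines of plain Python. This is only an illustration of the idea of an Operation running a sequence of Tasks and collecting their output in a log. The `Task` and `Operation` classes, their fields, and the log format are assumptions, and the sketch deliberately leaves out the queue itself.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Task:
    """One granular step; `run` returns the backend output as text."""
    name: str
    run: Callable[[], str]


@dataclass
class Operation:
    """An ordered set of Tasks plus a log of their output."""
    name: str
    tasks: List[Task]
    log: List[str] = field(default_factory=list)

    def execute(self) -> bool:
        """Run tasks in order; stop at the first failure and log it."""
        for task in self.tasks:
            try:
                output = task.run()
            except Exception as exc:
                self.log.append(f"[{task.name}] FAILED: {exc}")
                return False
            self.log.append(f"[{task.name}] {output}")
        return True


# Hypothetical steps standing in for real backend work.
deploy = Operation(
    "deploy",
    [
        Task("write-vhost", lambda: "vhost written"),
        Task("https-cert", lambda: "certificate issued"),
    ],
)
succeeded = deploy.execute()
print(succeeded, deploy.log)
```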

As discussed, we’ve modernized the task queue itself to use Celery, with RabbitMQ as the message broker. This stack replaces the bespoke implementation and unlocks a more distributed “control plane” for the Aegir system.

In addition to the task queue, Aegir3 also includes queues for running cron, taking scheduled backups, and so forth. Celery is certainly capable of implementing such queues. However, these are not an immediate priority.

One feature from Aegir3 that we would like to incorporate is the ability to retry failed tasks. However, this may not make sense in a Kubernetes context.

Operational workflows

Backup and restore are important tasks for Aegir. In the long run, Aegir5’s Kubernetes backend will probably want to do volume snapshots using a tool like Velero.

However, to keep things simple to start, we will operate similarly to how we do this in Aegir3, using an SQL dump and file tarball, a model which has served us well all these years.
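As a sketch of what that SQL-dump-plus-tarball model amounts to, the function below bundles a database dump and a files directory into a single gzipped archive using only the Python standard library. The function name, the archive layout (`database.sql` and `files/`), and the choice to pass the dump in as text are all assumptions for illustration; producing the dump from the database is out of scope here.

```python
import io
import tarfile
from pathlib import Path


def create_backup(sql_dump: str, files_dir: Path, archive_path: Path) -> Path:
    """Write a .tar.gz holding the SQL dump and the site's files directory.

    `archive_path` should live outside `files_dir`, otherwise the archive
    would try to include itself.
    """
    data = sql_dump.encode("utf-8")
    with tarfile.open(archive_path, "w:gz") as archive:
        # Add the dump from memory as a single member named database.sql.
        info = tarfile.TarInfo(name="database.sql")
        info.size = len(data)
        archive.addfile(info, io.BytesIO(data))
        # Add the files directory recursively under files/.
        archive.add(str(files_dir), arcname="files")
    return archive_path
```

Restoring would be the reverse: extract the archive, load `database.sql` into a fresh database, and copy `files/` into place.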

Cloning a site, in the long run, will be a composition of taking a snapshot/backup and immediately deploying from it. Here again, the composition of this kind of operation is very similar to Aegir3. Alternatively, we can simply deploy a new site and then synchronize the database and files from the source site.

Disabling a site will be a matter of running a job that rewrites the vhost to point to a static page, similarly to how Aegir3 does it now.

Additionally, the password reset functionality is much improved: we simply provide a “login to site” button.

URL aliases and HTTPS capabilities are both already handled within the Kubernetes backend via the ingress and certificate manager services. These will be exposed via the Drupal UI as configuration options when creating a Deployment to deploy a Release.